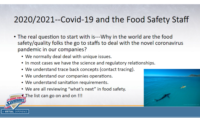

The safety of the food supply appropriately remains a high priority for industry stakeholders, regulatory agencies and consumers. With the emergence of new safety challenges and issues, companies are establishing and upgrading programs to reduce risk factors. These programs are continuously monitored for reliability and effectiveness. Due to the health and safety risks posed by chemical, microbiological and environmental contaminants, analytical methods are increasingly becoming a centerpiece of food safety programs.

Innovative analytical approaches are being developed in response to emerging food safety issues. Established, officially approved methods are used to monitor for known issues. Oftentimes, however, new analytical methods must be developed or modified rapidly in response to unforeseen issues, such as melamine contamination. In such instances, accurate data derived from validated analytical methods are required to enable industry stakeholders and regulators to make sound scientific decisions.

Through research, industry has improved a host of analytical methods in recent years, resulting in higher sensitivity for difficult-to-detect contaminants, detection of contaminants in new matrix classes and faster turnaround times for results. These advances have been made in the face of changing regulations, more rigorous method-validation standards and consumer demands for safe, quality products. Going forward, this spirit of innovation will continue to be crucial in industry’s efforts to ensure the safety of the global food chain.

Microbial Contamination

When you hear “food safety,” there is a natural tendency to think initially of microbiological issues. Over the past few decades, foodborne illnesses associated with Salmonella, Campylobacter, Listeria monocytogenes and Escherichia coli O157:H7 have been hammered into the public consciousness. In 2013 alone, significant news and media coverage were devoted to the discovery of L. monocytogenes in salads and Salmonella in peanut butter.

Among the top causes of product recalls nationwide, Salmonella is the number one pathogen of concern in the U.S., causing over 19,000 hospitalizations each year, according to U.S. Centers for Disease Control and Prevention statistics. Salmonella contamination is most commonly associated with eggs, poultry, meat, unpasteurized milk or juice, cheese, raw fruits and vegetables, spices and nuts.

Chemical Contamination

Public awareness of chemical safety issues is quickly gaining ground. Recent events have been captured in news headlines, highlighting the diverse safety and analytical challenges posed by chemical contaminants.

This summer, after years of consideration, the U.S. Food and Drug Administration (FDA) proposed an action level of 10 parts per billion for inorganic arsenic in apple juice. For two decades, FDA has monitored apple juice for arsenic and other contaminants through its Total Diet Study and its Toxic Elements in Food and Foodware, and Radionuclides in Food Program. The latest FDA survey of apple juice indicated that the majority of samples were below the action level for inorganic arsenic.[1]

Previous studies have reported higher arsenic levels in juice products. An action level and risk assessment for arsenic in rice and rice-based products might be the next step. FDA recently released analytical test results indicating that the levels found were too low to cause “immediate or short-term health effects.” FDA will next assess the potential health risk from long-term exposure to arsenic in rice and rice-based products.[2]

Acrylamide, a chemical used in industrial settings and in a wide range of products, has also long been a part of the human diet. Acrylamide formation is particularly likely in potatoes, cereals and other carbohydrate-rich foods, which make up approximately 40 percent of the American diet. Because of the endogenous matrix interferences inherent to starchy foods, the analysis of acrylamide presents numerous challenges.

This past summer, dicyandiamide, an agricultural chemical used by New Zealand dairy farmers to promote grass growth and reduce soil nitrogen leaching, was detected in minute traces in certain New Zealand milk powders.[3] The issue was isolated to a small number of manufacturing plants and products. Food safety authorities significantly boosted surveillance to prevent any further incidents, and a number of laboratories worldwide developed methodologies utilizing sophisticated analytical techniques to address the issue.

Building an arsenal of reliable and validated methods to meet today’s and tomorrow’s challenges rests on three essential elements: consensus, continuous evolution and new processes.

Building Consensus

The 2007 melamine crisis, which significantly impacted the pet food, feed and infant formula industries, illustrates the need for a process to quickly achieve scientific consensus on analytical methods. When melamine and related compounds were initially discovered in various food sources, cascading events led to investigations both in the U.S. and abroad. Government bodies and laboratories around the globe developed methods, collected data and dutifully reported their findings. However, analytical techniques, range-of-detection limits, sampling programs and other important factors varied from one method to the next.[4]

At a December 2008 meeting of the World Health Organization (WHO), food safety experts set forth melamine thresholds that government bodies would be able to adopt. Canada was the first country to adopt tolerable daily intake guidelines matching those set by the WHO and set a standard for allowable melamine levels in infant formula.[5]

While we have learned a great deal from this unfortunate event, it is plainly evident that industry could and should do more to prepare for the next crisis. For example, the formation of an emergency response consensus method group could be highly beneficial. Working with recognized global agencies, such as the International Food Safety Authorities Network, the group would be empowered to establish a process that allows experts to organize a forum, agree on a method and quickly standardize that method to offset the impact of a crisis.

AOAC International has established a Priority Response Working Group as part of its Stakeholder Panel on Strategic Food Analytical Methods. The working group's objective is to develop a proposal for establishing a consensus method in response to an emergency situation.

Evolving to Meet Changing Needs

When recalls and tragedies occur, beyond their significant economic impact on food companies, the trust of the consumer is compromised. The industrial microbiology market is driven by public concerns, increasing regulations and the need for faster results and meaningful data.

According to Food Micro, Fifth Edition: Microbiology Testing in the US Food Industry, over 200 million microbiology tests were performed in the U.S. food processing industry in 2010. Published by Strategic Consulting, the 2012 report presents findings from research conducted with more than 100 food processing plants producing a broad range of products in different food segments.

Microbiology testing will continue to move toward ever-more rapid and sensitive methods and techniques. As an example, the industry has moved toward larger sample sizes, from 25 g to 375 g. A larger sample increases sensitivity, raises the likelihood of finding bacterial contamination and ultimately decreases risk.
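
The effect of sample size on sensitivity can be illustrated with a simple calculation. The sketch below assumes that cells are randomly and evenly distributed through the lot (a Poisson model) and that the method detects a single viable cell in the analyzed portion. Both are simplifying assumptions, and the function name and the contamination level of 0.01 CFU/g are hypothetical choices made only for illustration.

```python
import math


def detection_probability(cfu_per_gram: float, sample_mass_g: float) -> float:
    """Chance that the analyzed portion contains at least one cell.

    Assumes a Poisson distribution of cells (random, unclustered
    contamination) and a method able to detect a single cell.
    """
    if cfu_per_gram < 0 or sample_mass_g < 0:
        raise ValueError("concentration and sample mass must be non-negative")
    expected_cells = cfu_per_gram * sample_mass_g
    # P(at least one cell) = 1 - P(zero cells) = 1 - exp(-expected count)
    return 1.0 - math.exp(-expected_cells)


# Hypothetical low-level contamination: 1 cell per 100 g of product.
contamination = 0.01
for mass in (25, 375):
    p = detection_probability(contamination, mass)
    print(f"{mass} g sample: detection probability {p:.3f}")
```

Under these assumptions, a lot contaminated at 0.01 CFU/g would be caught roughly 22 percent of the time with a 25 g sample and roughly 98 percent of the time with a 375 g sample. Real contamination is often clustered rather than evenly spread, so the actual gain may differ.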

The need for meaningful and actionable results has led to rapid methods, revolutionizing the industry. As part of this period of transformation, the food industry may look to existing technologies and applications in neighboring industries. Real-time polymerase chain reaction, for example, gave industry a more powerful and sensitive technique for quantitating DNA sequences. The evolution of microbiological testing will likely include continued gains in speed, as well as in the depth of the analysis, due to the ongoing efforts of research laboratories and leading diagnostic testing companies.

Chemical analysis is an important component in many quality and safety programs. A few of the more prevalent issues of concern include pesticides, toxins, veterinary drugs, heavy metals, allergens, economic adulterants and environmental pollutants.

Instrumentation such as liquid chromatography-tandem mass spectrometry (LC-MS/MS) and gas chromatography-MS/MS has given us the ability to detect chemical contaminants at very low concentrations, improving confidence in the food supply. To improve the quality of surveillance, industry has developed a number of multiresidue methods, wherein multiple substances can be analyzed simultaneously. However, there are drawbacks in that some residues and metabolites may not be included in the screens. Furthermore, due to different extraction protocols, the same method cannot always be used for all matrices.

As contaminant screens continue to grow in scope and depth, the ability to monitor the food supply more completely for known contaminants will improve.

New Directions

Unwanted or undeclared ingredients can find their way into foods either intentionally or by accident. In such cases, targeted testing cannot identify the issue directly; it becomes useful only once the chemical compound is known. Improvements in analytical instrumentation and data analysis software are allowing the development of nontargeted testing protocols to create a “fingerprint” of an ingredient (or food). Data from the subsequent testing of new lots of ingredients (or new productions of foods) are compared with the base fingerprints, with “high degrees of difference” raising warnings. A high degree of difference would prompt deeper examinations to assess the ingredient or food for possible contamination.

Various technologies are being explored for nontargeted screening. A recent example employed LC-MS/MS coupled with principal component analysis (PCA) to recognize adulterated and unadulterated foods.[6] After extraction of polar and nonpolar components of a variety of food ingredients, the two classes of components were analyzed using general separations on a C18 column with MS/MS detection. PCA identified the spiked ingredients as containing a “high degree of difference.” The MS data allowed identification of the adulterating agents.
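
The fingerprint comparison can be sketched in a few lines. The example below is not the method used in the cited work. It is a minimal illustration on synthetic data, in which each row stands in for the signal intensities measured on one lot. PCA is fitted to reference lots, and a new lot is flagged when its distance from the PCA model exceeds a threshold. The function names, the synthetic data and the threshold rule (three times the largest residual among the reference lots) are all assumptions made for illustration.

```python
import numpy as np


def residual_distance(samples, mean, components):
    """Distance between each sample and its projection onto the PCA model."""
    centered = np.atleast_2d(samples) - mean
    scores = centered @ components.T       # coordinates in PCA space
    reconstructed = scores @ components    # back-projection to signal space
    return np.linalg.norm(centered - reconstructed, axis=1)


def fit_fingerprint(reference, n_components=2):
    """Fit a PCA fingerprint to reference lots (rows) and set a threshold."""
    mean = reference.mean(axis=0)
    # Rows of vt are the principal axes of the mean-centered data.
    _, _, vt = np.linalg.svd(reference - mean, full_matrices=False)
    components = vt[:n_components]
    # Hypothetical rule: flag anything three times further from the
    # model than the worst-fitting reference lot.
    threshold = 3.0 * residual_distance(reference, mean, components).max()
    return mean, components, threshold


# Synthetic stand-in for instrument data: 20 reference lots, 50 signals each.
rng = np.random.default_rng(0)
base = rng.uniform(1.0, 10.0, size=50)     # typical signal profile
loadings = rng.normal(size=(2, 50))        # normal lot-to-lot variation


def make_lots(n):
    """Simulate n unadulterated lots with natural variation and noise."""
    variation = rng.normal(size=(n, 2)) @ loadings
    noise = rng.normal(scale=0.05, size=(n, 50))
    return base + variation + noise


reference_lots = make_lots(20)
mean, components, threshold = fit_fingerprint(reference_lots)

clean_lot = make_lots(1)[0]
spiked_lot = clean_lot.copy()
spiked_lot[7] += 5.0                       # one unexpected signal appears

for name, lot in (("clean", clean_lot), ("spiked", spiked_lot)):
    distance = residual_distance(lot, mean, components)[0]
    flagged = distance > threshold
    print(f"{name}: distance {distance:.2f}, flagged={flagged}")
```

In this sketch the spiked lot falls far outside the model built from the reference lots, while the clean lot does not. In practice, the number of components, the preprocessing of the signals and the warning threshold would all need to be established and validated for each ingredient.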

Other technologies have also been shown to identify potentially adulterated materials. One example uses nuclear magnetic resonance.[7] Near-infrared analysis with chemometric data analysis is being used in the food industry as a quality assurance tool, and this technology has been expanded to monitor for food adulteration.

Once Beyond Our Grasp

The availability of more sensitive, accurate and rapid testing methods is enhancing testing efficiencies, improving food safety programs and helping create a safer food supply. Advancements in technology are allowing industry to meet many analytical challenges in food product testing that were beyond our grasp not too long ago. The future holds much excitement, raising the bar for industry stakeholders to stay abreast of these new technologies.

John Szpylka, Ph.D., is the director of chemistry, North America, with Silliker Inc.

Xochitl Javier is the senior product manager with Silliker Inc.

References

1. www.fda.gov/Food/GuidanceRegulation/GuidanceDocumentsRegulatoryInformation/ChemicalContaminantsMetalsNaturalToxinsPesticides/ucm360020.htm.

2. www.fda.gov/food/foodborneillnesscontaminants/metals/ucm319870.htm.

3. gain.fas.usda.gov/Recent%20GAIN%20Publications/New%20Zealand%20Dairy%20Industry%20Grapples%20With%20Product%20Contaminant%20Issue_Wellington_New%20Zealand_3-14-2013.pdf.

4. www.who.int/foodsafety/fs_management/Melamine_3.pdf.

5. www.hc-sc.gc.ca/fn-an/securit/chem-chim/melamine/qa-melamine-qr-eng.php.

6. Szpylka, J. and J. DeVries. 2010. Approaches of monitoring to assure ingredient authenticity. AOAC International Annual Meeting.

7. Charlton, A.J., et al. 2008. Non-targeted detection of chemical contamination in carbonated soft drinks using NMR spectroscopy, variable selection and chemometrics. Analytica Chimica Acta 618(2):196–201.
